

Did you just fine-tune your favorite model and now wonder where to run it? We have you covered, with a simple API and predictable pricing.

Put your model on Hugging Face

Use a private repo if you wish; we don't mind. For better security, create a Hugging Face access token scoped to just that repo.
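Before creating a deployment, it can help to confirm that the token really can read the repo. The snippet below is a minimal sketch, not part of DeepInfra's workflow: it assumes the Hugging Face Hub model-info endpoint at `/api/models/<repo>`, and the `HF_TOKEN` variable and both function names are illustrative.

```shell
#!/bin/sh
# Sketch: check that a Hugging Face token can read a (possibly private) repo.
# Assumption: the Hub serves model info at /api/models/<owner>/<name>.
# HF_TOKEN and the function names are illustrative, not from the article.

# Print the model-info URL for a repo such as "my-org/my-model".
hf_model_info_url() {
  printf 'https://huggingface.co/api/models/%s\n' "$1"
}

# Print the HTTP status code returned for the repo lookup.
hf_repo_status() {
  curl -sS -o /dev/null -w '%{http_code}\n' \
    -H "Authorization: Bearer $HF_TOKEN" \
    "$(hf_model_info_url "$1")"
}
```

With `HF_TOKEN` exported, `hf_repo_status my-org/my-model` should print `200` when the token has read access; any other status suggests the token or the repo name needs another look.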

Create a custom deployment

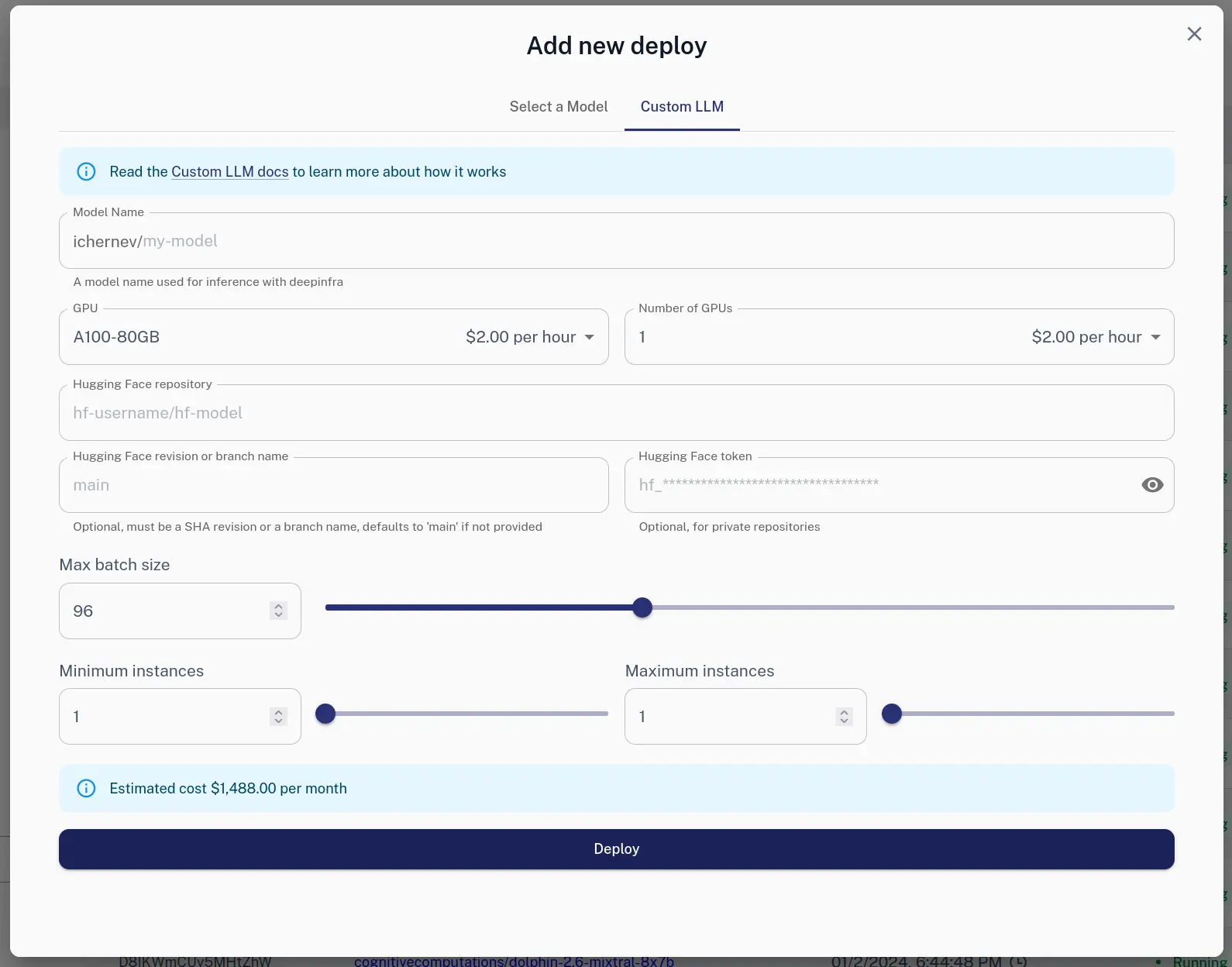

Via Web

You can use the Web UI to create a new deployment.

Via HTTP

We also offer an HTTP API:

curl -X POST https://api.deepinfra.com/deploy/llm \
  -H 'Content-Type: application/json' \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -d '{
    "model_name": "test-model",
    "gpu": "A100-80GB",
    "num_gpus": 2,
    "max_batch_size": 64,
    "hf": {
      "repo": "meta-llama/Llama-2-7b-chat-hf"
    },
    "settings": {
      "min_instances": 1,
      "max_instances": 1
    }
  }'
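If you create deployments from a script, it may be convenient to build the request body from variables. The following is a rough sketch under a few assumptions: the field names and endpoint are copied from the request above, while the `DEEPINFRA_API_KEY` variable, the function names, and the dry-run behaviour are illustrative choices rather than anything DeepInfra prescribes.

```shell
#!/bin/sh
# Sketch: build the deployment payload from variables and send it only
# when an API key is available. Names below are illustrative.

MODEL_NAME="${MODEL_NAME:-test-model}"
HF_REPO="${HF_REPO:-meta-llama/Llama-2-7b-chat-hf}"
GPU="${GPU:-A100-80GB}"
NUM_GPUS="${NUM_GPUS:-2}"

# Print the JSON body. Values are interpolated as-is, so they must not
# contain double quotes or backslashes.
build_payload() {
  cat <<EOF
{
  "model_name": "$MODEL_NAME",
  "gpu": "$GPU",
  "num_gpus": $NUM_GPUS,
  "max_batch_size": 64,
  "hf": { "repo": "$HF_REPO" },
  "settings": { "min_instances": 1, "max_instances": 1 }
}
EOF
}

# Send the payload, or just print it when DEEPINFRA_API_KEY is unset.
create_deployment() {
  if [ -z "${DEEPINFRA_API_KEY:-}" ]; then
    echo "DEEPINFRA_API_KEY is not set; printing the payload only." >&2
    build_payload
    return 0
  fi
  build_payload | curl -sS -X POST https://api.deepinfra.com/deploy/llm \
    -H 'Content-Type: application/json' \
    -H "Authorization: Bearer $DEEPINFRA_API_KEY" \
    --data-binary @-
}
```

Calling `create_deployment` without a key prints the body so you can inspect it first; exporting `DEEPINFRA_API_KEY` and calling it again sends the request.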

Use it

curl -X POST \
  -d '{"input": "Hello"}' \
  -H 'Content-Type: application/json' \
  -H "Authorization: Bearer YOUR_API_KEY" \
  'https://api.deepinfra.com/v1/inference/github-username/di-model-name'
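For repeated calls, the inference request above can be wrapped in a small helper. This is a sketch under stated assumptions: the URL pattern and the `input` field come from the command above, whereas `DEEPINFRA_API_KEY`, the function names, and the treatment of any 2xx status as success are illustrative. The response body is printed unparsed because its format is not described here.

```shell
#!/bin/sh
# Sketch: wrap the inference call. Names below are illustrative.

# Print the inference URL for a deployment path such as
# "github-username/di-model-name".
inference_url() {
  printf 'https://api.deepinfra.com/v1/inference/%s\n' "$1"
}

# Escape backslashes and double quotes so the prompt is safe inside a
# JSON string. Newlines and control characters are not handled.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Usage: run_inference <deployment-path> <prompt>
# Prints the raw response body; returns non-zero on a non-2xx status.
run_inference() {
  body_file="$(mktemp)"
  status="$(curl -sS -o "$body_file" -w '%{http_code}' -X POST \
    -H 'Content-Type: application/json' \
    -H "Authorization: Bearer $DEEPINFRA_API_KEY" \
    -d "{\"input\": \"$(json_escape "$2")\"}" \
    "$(inference_url "$1")")"
  cat "$body_file"
  rm -f "$body_file"
  case "$status" in
    2*) return 0 ;;
    *) echo "request failed with HTTP status $status" >&2; return 1 ;;
  esac
}
```

For example, `run_inference github-username/di-model-name "Hello"` sends the same request as the curl command above, with the key read from the environment.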

For an in-depth tutorial, see the Custom LLM Docs.


© 2026 DeepInfra. All rights reserved.